Every large sourcing event is now a team event, as we noted in our earlier post “Procurement Is a Team Sport,” where we quoted Gartner:

“The typical buying group for a complex B2B solution involves six to 10 decision makers, each armed with four or five pieces of information they’ve gathered independently and must deconflict with the group. At the same time, the set of options and solutions buying groups can consider is expanding as new technologies, products, suppliers and services emerge.

“These dynamics make it increasingly difficult for customers to make purchases. In fact, more than three-quarters of the customers Gartner surveyed described their purchase as very complex or difficult.”

There is an elegant theorem in economics called Arrow’s Impossibility Theorem, named for Nobel laureate Kenneth Arrow. It grew out of his doctoral thesis, which addressed the dynamics of ranked voting.

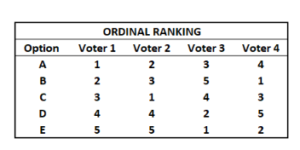

Consider a set of voters evaluating a set of different proposals. Each individual comes to the table with his or her own ranking of the options. Unlike a cardinal rating, this ranking attaches no value or score to the options; individuals simply list them in their preferred order. The objective is to convert these individual views into a group consensus that is deemed to be fair.

This sounds just like a committee that seeks to rank order different supplier proposals when running a strategic sourcing event.

Arrow defines what “fair” means:

- If everyone prefers proposal X to proposal Y, then the group consensus must do so as well.

- If each individual’s preference between X and Y stays the same, then the group’s preference between X and Y must also stay the same, even if individuals change their views on other pairs, such as W vs. Z.

- There is no single individual who can impose their choice on the group; there must be an acceptable consensus.

These sound like reasonable conditions for describing what is fair. Arrow spelled them out precisely so that he could reason about them mathematically.
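To make the second condition concrete, here is a minimal Python sketch using the Borda count, a widely used ranked-voting rule. The choice of Borda and the ballots are ours, for illustration only. Borda satisfies the first and third conditions, but in this example the group’s verdict on X vs. Y flips even though no evaluator changes how they rank X against Y.

```python
from collections import Counter


def borda_scores(ballots):
    """Borda count: on a ballot of n options, the top choice gets n-1 points,
    the next n-2, and so on down to 0 for the last choice."""
    scores = Counter()
    for ballot in ballots:
        n = len(ballot)
        for position, option in enumerate(ballot):
            scores[option] += n - 1 - position
    return dict(scores)


def prefers(scores, a, b):
    """True if the group, by Borda score, ranks a strictly above b."""
    return scores[a] > scores[b]


# Hypothetical profile A: 3 evaluators rank X > Y > Z, 2 rank Y > Z > X.
# (The repeated inner lists are never mutated, so sharing them is safe.)
profile_a = [["X", "Y", "Z"]] * 3 + [["Y", "Z", "X"]] * 2
# Profile B: the same two evaluators only swap X and Z (Y > X > Z).
# Nobody changes how they rank X against Y.
profile_b = [["X", "Y", "Z"]] * 3 + [["Y", "X", "Z"]] * 2

scores_a = borda_scores(profile_a)
scores_b = borda_scores(profile_b)
print(scores_a)  # {'X': 6, 'Y': 7, 'Z': 2} -> group prefers Y to X
print(scores_b)  # {'X': 8, 'Y': 7, 'Z': 0} -> group prefers X to Y
```

Only the two evaluators’ placement of Z moved, yet the group switched from preferring Y to preferring X. That is exactly the behavior the second condition rules out.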

So what did Arrow conclude?

Arrow’s Impossibility Theorem: When members of the group have three or more options, there is no way to convert the rankings of individuals into a group ranking that satisfies all three fairness conditions at once.
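A quick way to see the difficulty in action is the Condorcet paradox, a classic example that predates Arrow. It illustrates the problem; it is not Arrow’s proof. This Python sketch applies simple pairwise majority voting to three hypothetical evaluators ranking three supplier proposals labeled A, B and C.

```python
from itertools import combinations


def majority_winner(ballots, a, b):
    """Return whichever of a, b a strict majority of ballots ranks higher, or None on a tie."""
    a_wins = sum(1 for ballot in ballots if ballot.index(a) < ballot.index(b))
    b_wins = len(ballots) - a_wins
    if a_wins > b_wins:
        return a
    if b_wins > a_wins:
        return b
    return None


# Hypothetical ballots: each evaluator's strict ranking, best first.
ballots = [
    ["A", "B", "C"],
    ["B", "C", "A"],
    ["C", "A", "B"],
]

for a, b in combinations(["A", "B", "C"], 2):
    print(f"{a} vs {b}: majority prefers {majority_winner(ballots, a, b)}")
# A vs B: majority prefers A
# A vs C: majority prefers C
# B vs C: majority prefers B
```

Each head-to-head contest is decided 2 to 1, yet A beats B, B beats C, and C beats A. Majority voting cannot turn these three perfectly reasonable rankings into a consistent group ranking.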

Anyone who has watched a group try to make a procurement decision knows this dynamic well: reaching consensus is remarkably difficult, and reaching it quickly is almost impossible. Too many cooks spoil the broth.

But there is hope.

Arrow focused on ranked, or ordinal, preferences. In his setup of the problem, each individual took the list of options and put them in their preferred order.

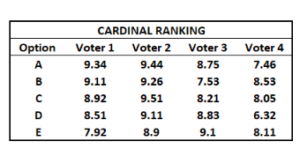

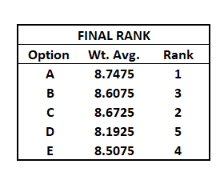

Cardinal voting means that each individual ranks the options but also attaches a score to each one, indicating how strong they judge that option to be.

“Psychological research has shown that cardinal ratings (on a numerical or Likert scale, for instance) are more valid and convey more information than ordinal rankings in measuring human opinion.”

The additional information carried by cardinal scores is what helps a group reach a fair consensus faster. Ordinal rankings alone do not convey enough information to make this happen. So when people in a purchasing group come to the table with nothing more than the supplier proposals listed in order of preference, we end up chasing our tails for weeks or months. People keep going back to the well for new information to support their views; rankings change, and we’re back at it again. The best way out of this mess is to ask people to rank the supplier proposals and to attach a score to each one.
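Here is a minimal sketch of what the extra information buys, assuming simple score voting (average each proposal’s scores, then sort). Score voting is one cardinal rule among several and is our choice for illustration; the three evaluators and their 1 to 10 scores are hypothetical. Stripped down to rankings, their ballots form a cycle (A > B > C, B > C > A, C > A > B), so pairwise majority voting on the rankings alone cannot settle on a group order. With the scores attached, the averages give a complete ranking.

```python
# Hypothetical 1-10 scores from three evaluators for proposals A, B and C.
scores = {
    "evaluator_1": {"A": 9, "B": 8, "C": 2},
    "evaluator_2": {"A": 5, "B": 7, "C": 6},
    "evaluator_3": {"A": 7, "B": 3, "C": 8},
}


def ordinal_ballot(evaluator_scores):
    """Drop the numbers and keep only the order: what a ranked ballot would record."""
    return sorted(evaluator_scores, key=evaluator_scores.get, reverse=True)


def group_ranking(scores_by_evaluator):
    """Score voting: average each option's score across evaluators, then sort."""
    options = list(next(iter(scores_by_evaluator.values())))
    averages = {
        opt: sum(s[opt] for s in scores_by_evaluator.values()) / len(scores_by_evaluator)
        for opt in options
    }
    return sorted(options, key=averages.get, reverse=True), averages


for name, s in scores.items():
    print(name, ordinal_ballot(s))
# evaluator_1 ['A', 'B', 'C']
# evaluator_2 ['B', 'C', 'A']
# evaluator_3 ['C', 'A', 'B']

order, averages = group_ranking(scores)
print(order)  # ['A', 'B', 'C']
```

The averages come out to 7.0 for A, 6.0 for B and about 5.33 for C. Evaluator 1’s strong preference for A (9 vs. 2 for C) outweighs the milder preferences of the others, which is information a ranked ballot throws away.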

The next question is, how do we score?

The purchasing group is going to be made up of people from different backgrounds. The best way to score the proposals is question by question: weight each question, assign a weight to each individual who assesses the responses to that question, and then roll the results up into a weighted average score.

This produces a group cardinal ranking of the supplier proposals that can anchor the buyer committee discussions. Ideally, there is a way to track comments that stakeholders may have on individual supplier answers, as well.
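The question-by-question roll-up described above can be sketched in a few lines of Python. This is a minimal illustration, not EdgeworthBox’s implementation: the questions, weights, evaluator roles, supplier names and 1 to 10 scores are all hypothetical. Each question score is a weighted average of the evaluators’ scores for that question, and each proposal’s total is a weighted average of its question scores.

```python
# Hypothetical question weights: relative importance of each question.
QUESTION_WEIGHTS = {"price": 0.5, "technical_fit": 0.3, "delivery": 0.2}

# Hypothetical evaluator weights per question: who scores each question
# and how much their assessment counts for that question.
EVALUATOR_WEIGHTS = {
    "price": {"finance": 0.7, "procurement": 0.3},
    "technical_fit": {"engineering": 0.8, "procurement": 0.2},
    "delivery": {"operations": 0.5, "procurement": 0.5},
}


def weighted_average(values, weights):
    """Weighted mean of values[k], normalized by the weights of the keys present."""
    total_weight = sum(weights[k] for k in values)
    return sum(values[k] * weights[k] for k in values) / total_weight


def score_proposal(answers):
    """Roll one proposal's scores up: evaluators -> question score -> total.

    answers maps question -> {evaluator: score on a 1-10 scale}.
    """
    missing = QUESTION_WEIGHTS.keys() - answers.keys()
    if missing:
        raise ValueError(f"proposal has no scores for: {sorted(missing)}")
    question_scores = {
        question: weighted_average(by_evaluator, EVALUATOR_WEIGHTS[question])
        for question, by_evaluator in answers.items()
    }
    return weighted_average(question_scores, QUESTION_WEIGHTS), question_scores


# Hypothetical scores for two supplier proposals.
proposals = {
    "supplier_a": {
        "price": {"finance": 8, "procurement": 6},
        "technical_fit": {"engineering": 6, "procurement": 7},
        "delivery": {"operations": 9, "procurement": 7},
    },
    "supplier_b": {
        "price": {"finance": 6, "procurement": 7},
        "technical_fit": {"engineering": 9, "procurement": 8},
        "delivery": {"operations": 7, "procurement": 8},
    },
}

totals = {name: score_proposal(answers)[0] for name, answers in proposals.items()}
for name in sorted(totals, key=totals.get, reverse=True):
    print(f"{name}: {totals[name]:.2f}")
# supplier_b: 7.29
# supplier_a: 7.16
```

Dividing by the sum of the weights means the weights need not add up to 1. The sketch raises an error when a proposal is missing a question, so an unanswered question cannot quietly inflate a total; the per-question scores it also returns are a natural place to hang stakeholder comments.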

Do this, and the buyer committee can reach consensus faster.

This is what we have built at EdgeworthBox. Our scoring functionality lets groups develop exactly this kind of weighted average scoring of supplier proposals. Even better, it can span multiple organizations, for example when a procurement has more than one buyer. Give us a shout. We love talking about this stuff.